Some pages on a website are not meant to appear in search results. They are useful to visitors, but they add nothing as entries in a search engine’s listings.

This is where the noindex tag is useful. It tells search engines not to include a specific page in their index.

In simple terms, the page can still exist and be visited, but it will not show up in search results.

How It Works

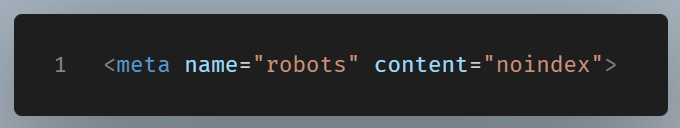

The noindex tag is part of the page’s HTML.

By “noindex tag” we mean the robots meta tag placed in the page’s <head> section: <meta name="robots" content="noindex">.

The name="robots" attribute addresses every well-behaved crawler; think of it as sending a message to every robot listening on that channel. To address only Google’s crawler, write name="googlebot" instead.

The content="noindex" directive is the actual message being delivered. It tells those crawlers: “Do not include this page in your search index.” As a result, the page will not appear in search engine results pages (SERPs), even if the crawler visits and reads it.

The crawler can still access the page — it just won’t list it publicly.
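
To make the mechanics concrete, here is a minimal Python sketch of how a crawler-like script might detect the directive in a page's HTML. The names RobotsMetaParser and has_noindex are hypothetical helpers invented for this illustration, and the sketch assumes the page's HTML is already available as a string. It is not how any particular search engine implements the check.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" ...> style tags.

    Hypothetical helper for illustration; for simplicity it inspects
    every <meta> tag in the document rather than only those in <head>.
    """

    def __init__(self, crawler_names=("robots", "googlebot")):
        super().__init__()
        # Meta names this pretend crawler listens to.
        self.crawler_names = {name.lower() for name in crawler_names}
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").strip().lower() not in self.crawler_names:
            return
        # The content attribute may hold several comma-separated directives.
        for directive in attr.get("content", "").split(","):
            directive = directive.strip().lower()
            if directive:
                self.directives.add(directive)


def has_noindex(html, crawler_names=("robots", "googlebot")):
    """Return True if the HTML carries a noindex directive for these names."""
    parser = RobotsMetaParser(crawler_names)
    parser.feed(html)
    return "noindex" in parser.directives


page = '<html><head><meta name="robots" content="noindex"></head><body>Thanks!</body></html>'
print(has_noindex(page))
```

Passing crawler_names=("robots",) models a crawler that ignores tags addressed only to googlebot.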

When You Should Use It

There are certain types of pages that are useful but not intended to be found through search engines.

- Thank you pages after form submissions

- Login or account pages

- Duplicate content

This is not an exhaustive list. The important thing to bear in mind is that in these cases, keeping the page out of the index helps maintain a cleaner and more focused presence in search results.

Do noindexed pages pass PageRank?

Pages marked noindex will be taken out of Google’s index. Eventually, Google will stop crawling these pages, which means the links on them are no longer followed and PageRank (or link juice) is not passed on to the pages they link to.

Can noindex remedy duplicate content?

The noindex tag is not the tool to deal with duplicate content on a website.

To handle duplicate pages on your website, use canonicalization instead.

By doing so, you guide search engines to index only the main (canonical) version of the page. This approach also ensures that link signals from all non-canonical versions are combined, which can significantly enhance the visibility of the canonical version.
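
Canonicalization is commonly declared with a link element whose rel attribute is "canonical" and whose href points at the main version. As a rough sketch, the Python below reads that declaration from a page. CanonicalParser and find_canonical are hypothetical names for this example, and the sketch assumes the HTML is already loaded as a string.

```python
from html.parser import HTMLParser


class CanonicalParser(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag it sees.

    Hypothetical helper for illustration only.
    """

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr = {key: (value or "") for key, value in attrs}
        # rel may list several space-separated values.
        rel_values = attr.get("rel", "").lower().split()
        if "canonical" in rel_values and attr.get("href"):
            self.canonical = attr["href"]


def find_canonical(html):
    """Return the declared canonical URL, or None if the page declares none."""
    parser = CanonicalParser()
    parser.feed(html)
    return parser.canonical


duplicate_page = (
    '<html><head>'
    '<link rel="canonical" href="https://example.com/shoes">'
    '</head><body>Shoes, sorted by price</body></html>'
)
print(find_canonical(duplicate_page))
```

A duplicate that declares the main URL this way asks search engines to index that URL and credit it with the duplicate's link signals.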

Noindex Versus Robots.txt

It is easy to confuse noindex with robots.txt, but they serve different roles:

- robots.txt controls whether a page can be crawled

- noindex controls whether a page can be indexed

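A page blocked by robots.txt may still appear in results if other sites link to it. A page with noindex will not be listed, even if it is crawled. For the same reason, the two should not be combined on one page: if robots.txt blocks crawling, the crawler never reads the noindex tag.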
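
The difference between the two controls can be sketched in Python with the standard library's urllib.robotparser. The functions can_crawl and describe, the sample rules, and the example.com URLs are assumptions made up for this illustration; the returned descriptions simply restate the behaviour outlined above.

```python
from urllib.robotparser import RobotFileParser


def can_crawl(robots_txt, url, user_agent="*"):
    """Return True if the given robots.txt text allows fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


def describe(robots_txt, url, page_has_noindex):
    """Summarise how crawl rules and noindex interact for one page."""
    if not can_crawl(robots_txt, url):
        # The crawler never fetches the page, so it never sees a noindex tag.
        return "blocked from crawling; may still be listed if linked from elsewhere"
    if page_has_noindex:
        return "crawlable, but kept out of the index"
    return "crawlable and eligible for indexing"


# Hypothetical rules: everything under /private/ is off limits to all crawlers.
robots = "User-agent: *\nDisallow: /private/\n"

print(describe(robots, "https://example.com/private/report", page_has_noindex=True))
print(describe(robots, "https://example.com/thank-you", page_has_noindex=True))
```

The first call shows the trap: the page carries noindex, but because crawling is blocked the directive is never read.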

Final Thought

The noindex tag gives you a simple way to manage what appears in search engine results.

It doesn’t remove content from your site; it just prevents specific pages from being listed in search results.